AI development cost in 2026 ranges from $5,000 for a basic proof of concept to $500,000+ for enterprise-grade platforms. But that range hides the real number: inference cost. A production AI system processing 100,000 daily requests on Claude Sonnet can burn through about $19,000/month in API calls alone (at 150 input and 400 output tokens per request). Development is the upfront check. Inference is the monthly subscription you cannot cancel. This guide breaks down both, shows where the 85% failure rate comes from, and gives you a 4-step framework to estimate your actual Year 1 budget.


What Determines AI Development Cost in 2026?

AI development cost isn’t one number. It’s a function of four variables that compound one another.

Complexity accounts for 30-40% of total project cost. A sentiment analysis tool that classifies text as positive, negative, or neutral requires a different architecture than a recommendation engine processing millions of user interactions. The first might use off-the-shelf models through an API. The second needs custom training, specialized infrastructure, and ongoing optimization.

Data preparation consumes 20-40% of the budget in first-time AI implementations. This is the cost most teams underestimate. Epoch AI’s research on frontier model development found that even for models at the GPT-4 level, R&D staff accounted for 29-49% of total cost, and much of that staff time goes to cleaning, labeling, and structuring training data.

Infrastructure costs run 15-20% of the development budget. Enterprise-scale cloud ML deployments (AWS SageMaker, Google Vertex AI) routinely exceed $500,000 annually for production NLP systems. That’s infrastructure alone. No staff, no development time.

Team composition drives the remaining variance. Data scientists in the US command $120,000-$180,000 annually. ML engineers run $130,000-$200,000. EU rates are 40-50% lower. Outsourcing to Eastern European AI development companies cuts these rates further while maintaining quality. ProductCrafters’ team delivers production AI at hourly rates 60% below Bay Area equivalents.

The compounding effect matters. A project with high complexity (custom model training), poor data readiness (extensive preparation needed), and an in-house US team can hit $500,000 before launch. The same functional outcome with API-first architecture, clean data, and an outsourced team might cost $80,000.

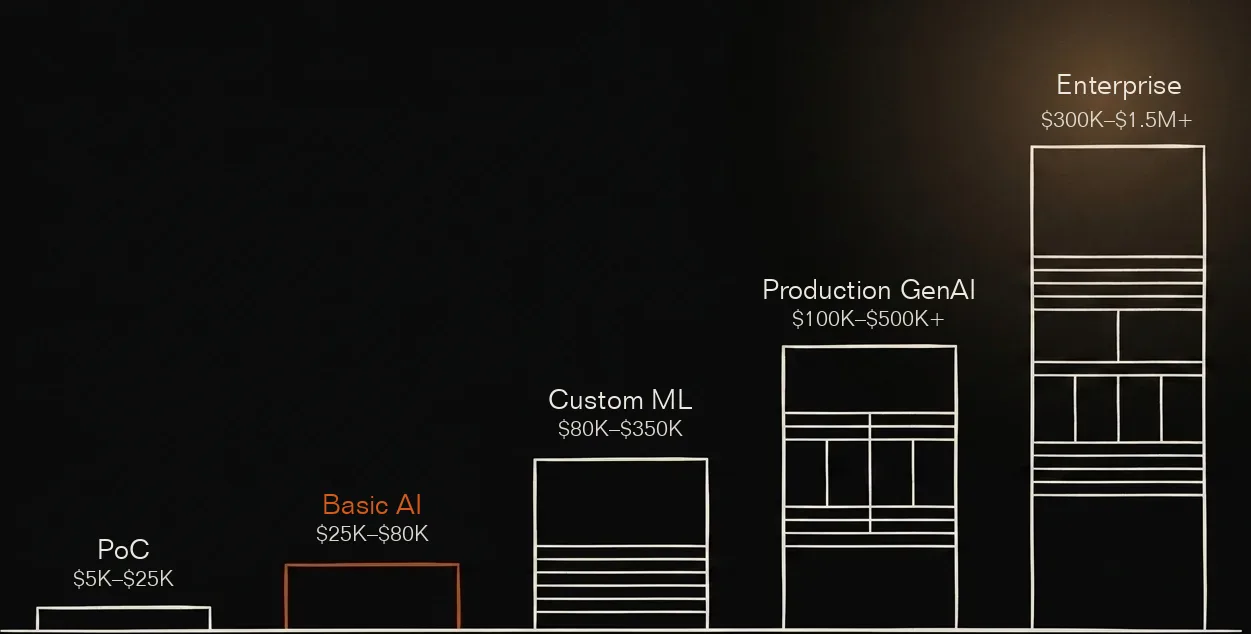

The Real Cost Ranges by Project Type

Cost ranges without context are useless. Here’s what each tier actually delivers, and the specific budget lines that make up each figure.

Proof of Concept: $5,000-$25,000

This tier gets you: a working prototype that answers one question—can AI solve this specific problem? Not a product. A test.

What’s included:

- Pre-built model integration (OpenAI, Claude, or Llama APIs)

- Single integration point (one data source or platform)

- Basic prompt engineering and testing

- 2-4 weeks development time

- Validation report with accuracy metrics

Real example: A basic AI chatbot for internal workflows lands between $5,000 and $15,000. Add a custom knowledge base and a light UI, and you’re at $20,000-$25,000.

Where PoC budgets go wrong: teams spend $50,000 building a polished demo instead of $15,000 validating the core hypothesis. A PoC should answer one question: can AI solve this specific problem with acceptable accuracy? Skip custom UI. Skip production infrastructure. Skip edge-case handling. If the answer is no, you saved $35,000. If yes, you have evidence to justify the full build.

Basic AI Product: $25,000-$80,000

This tier gets you: a production-ready AI feature for a specific use case. Think of a customer support chatbot with multiple knowledge sources, a document classifier deployed in your workflow, or AI-powered search for your product.

What’s included:

- API integration with fallback handling

- Custom UI/UX design

- Multiple data source connections

- Production deployment and monitoring

- 4-8 weeks development time

This is where most SMB and startup AI projects land. You’re not building a platform—you’re adding AI capability to an existing product or workflow.
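The "API integration with fallback handling" item above can be as simple as trying a primary provider, retrying transient failures, then moving on to a backup. A minimal Python sketch, assuming each provider is wrapped as a plain function that takes a prompt and returns text or raises; `complete_with_fallback`, `flaky_primary`, and `backup` are hypothetical stand-ins, not part of any vendor SDK:

```python
import time
from typing import Callable, Sequence

# Hypothetical provider wrapper: takes a prompt, returns text, raises on failure.
Provider = Callable[[str], str]


def complete_with_fallback(prompt: str, providers: Sequence[Provider],
                           retries: int = 2, backoff_s: float = 0.5) -> str:
    """Try each provider in order, retrying each with exponential backoff."""
    errors: list[Exception] = []
    for provider in providers:
        for attempt in range(retries):
            try:
                return provider(prompt)
            except Exception as exc:  # sketch only: narrow this to the SDK's error types
                errors.append(exc)
                if attempt < retries - 1:
                    time.sleep(backoff_s * 2 ** attempt)
    raise RuntimeError(f"all providers failed: {errors!r}")


def flaky_primary(prompt: str) -> str:  # stand-in for a real API call that is down
    raise TimeoutError("primary model timed out")


def backup(prompt: str) -> str:  # stand-in for a second provider
    return f"backup answered: {prompt}"


print(complete_with_fallback("Where is my order?", [flaky_primary, backup], backoff_s=0.01))
```

In production you would catch the SDK's specific timeout and rate-limit errors rather than every `Exception`, and log which provider answered so you can see how often the fallback fires.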

Custom Machine Learning System: $80,000-$350,000

This tier gets you: a trained model specific to your data and use case, deployed via API, with monitoring infrastructure.

What’s included:

- Custom model training on your dataset (requires 5,000-50,000+ labeled examples)

- Model serving infrastructure (typically cloud-hosted)

- API endpoints for integration

- Performance monitoring and logging

- 3-6 months development time

- MLOps pipeline for retraining

Real example: Regulated industries push past the top of this tier. Production ML systems for healthcare applications run $300,000-$600,000; finance hits $300,000-$800,000. Why so high? Compliance requirements (HIPAA, SOC2, PCI-DSS) and accuracy thresholds that leave little margin for error.

Production Generative AI Application: $100,000-$500,000+

This tier gets you: a generative AI system (text, image, code, or multimodal) fine-tuned to your domain and scaled for production traffic.

What’s included at $100,000-$200,000:

- Fine-tuned foundation model (Claude, GPT-4, or an open-source model such as Llama 3)

- Production infrastructure with auto-scaling

- Full-suite testing and safety guardrails

- Basic compliance documentation

- 4-8 months development time

What’s included at $300,000-$500,000+:

- Custom model training from scratch or extensive fine-tuning

- Enterprise-grade security and compliance

- Multi-region deployment

- Full MLOps stack and observability tooling

- 8-14 months development time

- Ongoing optimization team during launch

Enterprise AI Platform: $300,000-$1.5M+

This tier gets you: an AI system integrated across multiple business functions with organization-wide impact.

Kellton’s 2026 research pins enterprise AI at $300,000-$1.5 million upfront, plus 20-30% annual maintenance costs. A $1M build means $200,000-$300,000 per year to keep it running: model retraining, infrastructure updates, security patches, and performance tuning.

What pushes costs past $500,000:

- Multi-system integration. Connecting AI to SAP, Salesforce, and legacy databases requires $50,000-$150,000 in custom middleware and API development.

- Compliance certification. HIPAA compliance assessments add $45,000-$100,000 in audit costs. SOC2 Type II runs $30,000-$80,000. Payment-card and data-protection requirements (PCI-DSS, GDPR) compound further.

- Global deployment. Multi-region infrastructure for latency and data residency requirements adds $100,000-$200,000 to initial build plus ongoing costs.

- Change management. Training 500+ employees to use AI tools effectively costs $50,000-$100,000 in workshops, documentation, and support.

The Hidden Cost Most Guides Skip: Running Your AI

Development is the upfront payment. Inference is the subscription.

Most AI cost guides focus obsessively on development ranges while burying inference costs in a single paragraph. This framing misleads buyers. A $100,000 development project with $20,000/month inference costs is actually a $340,000 Year 1 investment. The guides that hide this arithmetic are optimizing for lead generation, not buyer education.
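Spelled out, that Year 1 figure is development plus twelve months of inference (infrastructure and maintenance are left out here; the worked example below adds them):

$$
C_{\text{Year 1}} = C_{\text{dev}} + 12 \times C_{\text{inference/month}} = \$100{,}000 + 12 \times \$20{,}000 = \$340{,}000
$$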

Current API pricing (April 2026, USD per million tokens, or MTok):

| Model | Input (per MTok) | Output (per MTok) |
|---|---|---|
| Claude Sonnet 4.7 | $3.00 | $15.00 |
| Claude Opus 4.7 | $5.00 | $25.00 |
| GPT-5.4 | $2.50 | $15.00 |
| GPT-4o | $2.50 | $10.00 |

Now let’s do the math most cost guides skip.

Scenario: Customer support AI handling 100,000 queries daily

- Average query: 150 tokens input, 400 tokens output

- Daily tokens: 15M input + 40M output = 55M total

- Using Claude Sonnet: (15 × $3) + (40 × $15) = $45 + $600 = $645/day

- Monthly inference cost: $19,350

Scenario: Internal knowledge assistant with 1,000 daily users

- Average: 10 queries per user, 200 tokens input, 500 tokens output per query

- Daily tokens: 2M input + 5M output

- Using GPT-4o: (2 × $2.50) + (5 × $10) = $5 + $50 = $55/day

- Monthly inference cost: $1,650

The first scenario costs about $116,000 in six months of operation, more than many teams’ entire annual AI budget. The second is manageable. That’s a nearly 12x gap, driven by usage: ten times the query volume, on a model with pricier output tokens.
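Both scenarios follow one formula: daily tokens in millions times the per-MTok price, summed for input and output, then multiplied by 30 days. A minimal Python sketch, assuming a 30-day month as the scenarios above do; `monthly_inference_cost` is an illustrative name:

```python
def monthly_inference_cost(queries_per_day: int, input_tokens: int, output_tokens: int,
                           input_price: float, output_price: float, days: int = 30) -> float:
    """Monthly API spend in USD; prices are USD per million tokens (MTok)."""
    input_mtok = queries_per_day * input_tokens / 1_000_000
    output_mtok = queries_per_day * output_tokens / 1_000_000
    daily = input_mtok * input_price + output_mtok * output_price
    return daily * days


# Scenario 1: customer support on Claude Sonnet ($3 input / $15 output per MTok)
support = monthly_inference_cost(100_000, 150, 400, 3.00, 15.00)
# Scenario 2: 1,000 users x 10 queries each on GPT-4o ($2.50 / $10 per MTok)
assistant = monthly_inference_cost(1_000 * 10, 200, 500, 2.50, 10.00)
print(f"support: ${support:,.0f}/month, assistant: ${assistant:,.0f}/month")
# support: $19,350/month, assistant: $1,650/month
```

Plug in your own traffic and token counts before trusting any range in this guide; the output-token term usually dominates.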

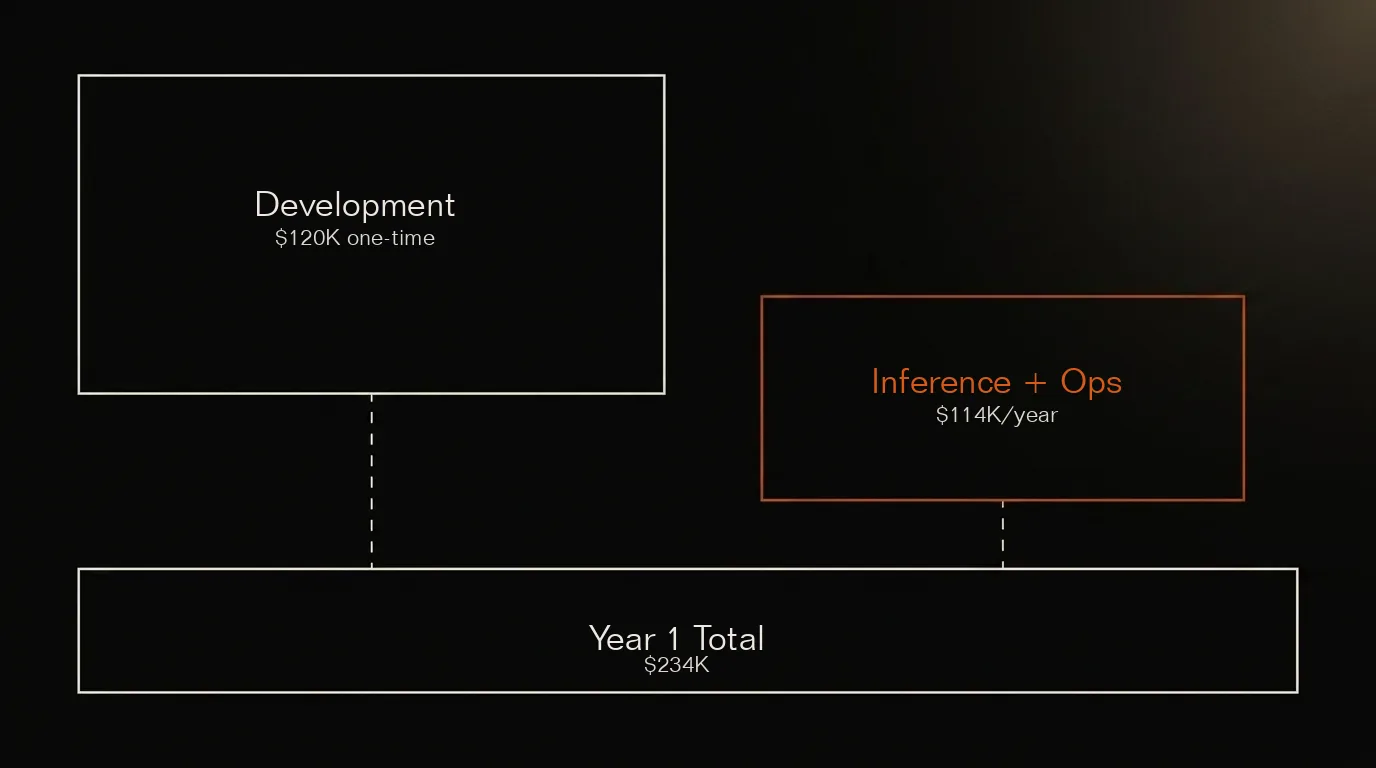

Worked example: Year 1 vs Year 2 total cost

Consider a $120,000 customer support AI build:

| Cost Component | Year 1 | Year 2 |
|---|---|---|
| Development | $120,000 | $0 |
| Inference (at 50K queries/day) | $72,000 | $72,000 |
| Infrastructure | $18,000 | $18,000 |
| Maintenance (20% of dev) | $24,000 | $24,000 |
| Model updates | $0 | $35,000 |
| Total | $234,000 | $149,000 |

Year 1 cost: nearly double the development budget. Year 2 cost: still $149,000 with no new features. The “cheap AI chatbot” that cost $120,000 to build requires $383,000 over two years. Budget accordingly.
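The table reduces to a simple rule: development is paid once, while inference, infrastructure, and maintenance (20% of the original build) recur every year. A minimal Python sketch reproducing the figures above; `yearly_tco` is an illustrative helper:

```python
def yearly_tco(dev_cost: float, inference_per_year: float, infra_per_year: float,
               maintenance_rate: float, model_updates: float, year: int) -> float:
    """Total cost for one year; development is charged only in Year 1.

    Maintenance is charged every year as a share of the original build cost.
    """
    development = dev_cost if year == 1 else 0.0
    maintenance = maintenance_rate * dev_cost
    return development + inference_per_year + infra_per_year + maintenance + model_updates


y1 = yearly_tco(120_000, 72_000, 18_000, 0.20, model_updates=0, year=1)
y2 = yearly_tco(120_000, 72_000, 18_000, 0.20, model_updates=35_000, year=2)
print(f"Year 1: ${y1:,.0f}  Year 2: ${y2:,.0f}  Two-year: ${y1 + y2:,.0f}")
# Year 1: $234,000  Year 2: $149,000  Two-year: $383,000
```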

Cost optimization strategies that actually work:

- Prompt caching: Both Anthropic and OpenAI discount cached input tokens, by up to ~90% on current models. System prompts and other prefixes that repeat across queries should be cached.

- Batch processing: 50% discount for non-real-time workloads. If your use case tolerates 24-hour latency, halve the bill.

- Model tiering: Use Claude Haiku ($1.00/$5.00 per MTok) or GPT-4o-mini for routine classification, summarization, and extraction where accuracy requirements are lower. Route only complex queries to expensive models.

- Fine-tuning tradeoff: Fine-tuned smaller models can outperform prompted large models at 10x lower cost. But only for narrow tasks.
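To see how these levers interact, here is a minimal Python sketch applying them to the 100,000-queries/day Sonnet scenario. It assumes a flat ~90% discount on cached input reads (cache-write surcharges ignored), a flat 50% batch discount, and a hypothetical 70% share of queries that can be routed to Haiku; `daily_cost` and `tiered_daily_cost` are illustrative names:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input: float   # USD per million input tokens
    output: float  # USD per million output tokens


SONNET = Price(3.00, 15.00)
HAIKU = Price(1.00, 5.00)


def daily_cost(queries: float, in_tok: int, out_tok: int, price: Price,
               cached_share: float = 0.0, cache_discount: float = 0.90,
               batch: bool = False) -> float:
    """Daily USD spend for one workload.

    cached_share: assumed fraction of input tokens read from the prompt cache.
    batch: apply an assumed flat 50% discount to the whole bill.
    """
    input_mtok = queries * in_tok / 1_000_000
    output_mtok = queries * out_tok / 1_000_000
    cached = input_mtok * cached_share
    cost = ((input_mtok - cached) * price.input
            + cached * price.input * (1 - cache_discount)
            + output_mtok * price.output)
    return cost * 0.5 if batch else cost


def tiered_daily_cost(queries: float, in_tok: int, out_tok: int, cheap_share: float,
                      cheap: Price = HAIKU, premium: Price = SONNET) -> float:
    """Route an assumed share of queries to the cheaper model, the rest to premium."""
    cheap_q = queries * cheap_share
    return (daily_cost(cheap_q, in_tok, out_tok, cheap)
            + daily_cost(queries - cheap_q, in_tok, out_tok, premium))


# 100,000 queries/day, 150 input + 400 output tokens each
print("baseline:", round(daily_cost(100_000, 150, 400, SONNET), 2))
print("caching: ", round(daily_cost(100_000, 150, 400, SONNET, cached_share=0.8), 2))
print("batch:   ", round(daily_cost(100_000, 150, 400, SONNET, batch=True), 2))
print("tiering: ", round(tiered_daily_cost(100_000, 150, 400, cheap_share=0.7), 2))
```

Under these assumptions the baseline is $645/day. Caching 80% of input only trims it to about $613, because output tokens account for $600 of that bill. Batching halves it to $322.50, and routing 70% of queries to Haiku brings it to $344. For this output-heavy workload, tiering and batching move the number far more than caching.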

Why 85% of AI Projects Fail (And How to Avoid the $100K+ Mistake)

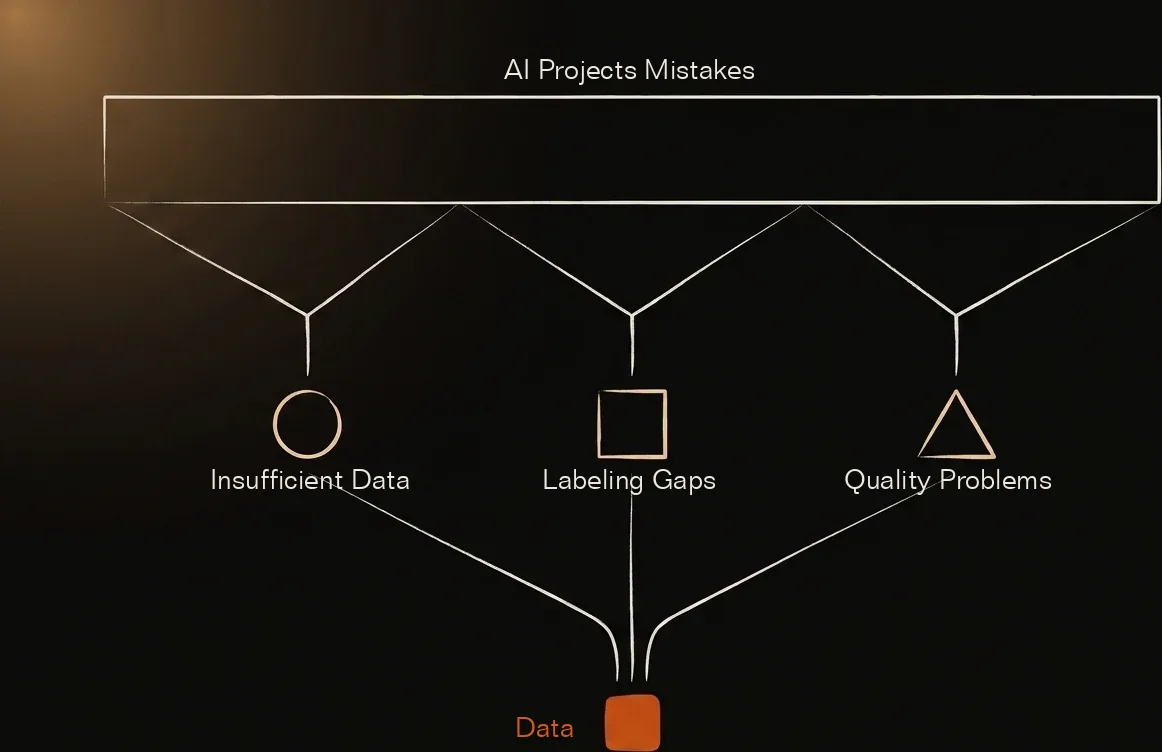

The 85% failure statistic traces back to Gartner’s 2018 forecast that through 2022, 85% of AI projects would deliver erroneous outcomes due to bias in data, algorithms, or the teams managing them. That forecast proved accurate. But the cause wasn’t AI complexity.

Gartner’s February 2025 update sharpens the diagnosis: 60% of AI projects will be abandoned by 2026 if unsupported by AI-ready data. The bottleneck isn’t algorithms. It’s data.

The three data quality failures that kill AI projects:

- Insufficient volume. Most businesses lack sufficient training data for their intended AI applications. A recommendation engine needs millions of interaction records. Fraud detection needs tens of thousands of labeled examples. Most organizations have neither.

- Labeling gaps. Data annotation for 100,000 samples requires 300-850 hours of human work. That’s before any engineering begins. At $30/hour for skilled annotators, the bill runs $9,000-$25,500.

- Quality problems. 66% of companies encounter errors or biases in training datasets. Models trained on dirty data produce unreliable outputs. Garbage in, garbage out. At $300,000 scale. A financial services firm discovered their fraud model was trained on data where 40% of “fraud” labels were actually disputes—different category, different pattern. The model worked perfectly in testing and failed completely in production. Cost to rebuild: $180,000.
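The labeling numbers scale linearly, so they are easy to re-derive for your own dataset. Dividing 300-850 hours by 100,000 samples implies roughly 10.8-30.6 seconds of annotator time per sample. A minimal Python sketch, assuming a flat per-sample time and the $30/hour rate above; `annotation_cost` is an illustrative helper:

```python
def annotation_cost(samples: int, seconds_per_sample: float,
                    hourly_rate: float = 30.0) -> tuple[float, float]:
    """Return (annotator hours, USD cost) for labeling `samples` items."""
    hours = samples * seconds_per_sample / 3600
    return hours, hours * hourly_rate


# Per-sample times implied by 300-850 hours for 100,000 samples
for seconds in (10.8, 30.6):
    hours, cost = annotation_cost(100_000, seconds)
    print(f"{seconds:>4} s/sample: {hours:,.0f} hours, ${cost:,.0f}")
```

Swap in your own sample count: 20,000 labels at the same rates would take about 60-170 hours, or $1,800-$5,100.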

The de-risking approach:

Before committing $100K+ to AI development, spend $15,000-$30,000 on a data readiness assessment. This should answer:

- Do you have enough data for the intended use case?

- What labeling and cleaning work is required?

- What’s the realistic timeline to data readiness?

- Is an API-first approach viable given your data constraints?

Build vs. Buy: When Custom AI Development Makes Sense

The cost calculus for custom AI development shifted dramatically between 2024 and 2026.

API-first is now the default. Claude and GPT-4 class models, accessible via API at $2.50-$5.00 per million input tokens ($10-$25 per million output tokens), handle 80% of text-based AI tasks that would have required custom development three years ago. Building custom means competing with foundation models trained on more data than any single organization can assemble.

Custom development still wins under three conditions:

- Narrow tasks with proprietary data. If you have 50,000+ labeled examples for a specific classification or extraction task, fine-tuning a smaller model beats prompting a larger one in cost, latency, and accuracy.

- Regulatory constraints. HIPAA obligations, SOC2 commitments, and certain financial regulations can require data to stay on-premises or in specific regions, and external API calls may be prohibited. Healthcare organizations processing PHI through external APIs without a business associate agreement face HIPAA violation fines of $50,000-$1.9M. FINRA-supervised institutions often cannot use third-party AI APIs at all. For these organizations, the $150,000 custom deployment isn’t optional: it’s the only legal path.

- Unit economics at scale. At 10M+ daily requests, the math flips. A fine-tuned Llama model on your own infrastructure costs a fraction of what API calls cost at that volume. One fintech client switched from the GPT-4 API to self-hosted Llama 3 at 15M daily requests and cut inference costs from $180,000/month to $35,000/month. The $150,000 migration project paid for itself in 45 days.

The breakeven calculation:

API-first approach at 50,000 daily requests:

- Claude Sonnet: ~$100/day = $3,000/month = $36,000/year

Custom fine-tuned model (Llama 3):

- Development: $80,000-$150,000

- Infrastructure: $2,000-$5,000/month

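- Breakeven: at $2,000/month infrastructure, the custom model saves $1,000/month, so recovering $80,000-$150,000 takes 80-150 months. At $3,000/month or more, it never breaks even at this volume.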

For most organizations, the API-first approach wins on risk-adjusted terms. Custom models demand multi-year commitment before ROI. APIs let you pivot anytime.
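The breakeven month is the build cost divided by what self-hosting saves each month versus the API bill. A minimal Python sketch using the 50,000-requests/day figures above; it ignores maintenance and retraining (which would push breakeven further out) and any change in API prices. `breakeven_months` is an illustrative helper:

```python
import math


def breakeven_months(dev_cost: float, api_monthly: float, infra_monthly: float) -> float:
    """Months until a custom model's build cost is recovered by lower running costs.

    Returns math.inf when self-hosting costs as much as or more than the API.
    """
    monthly_savings = api_monthly - infra_monthly
    if monthly_savings <= 0:
        return math.inf
    return dev_cost / monthly_savings


# API: ~$3,000/month. Custom: $80K-$150K build, $2K-$5K/month infrastructure.
for dev in (80_000, 150_000):
    for infra in (2_000, 5_000):
        print(f"build ${dev:,}, infra ${infra:,}/mo -> {breakeven_months(dev, 3_000, infra)} months")
```

The same formula shows why volume matters: the savings term only becomes large enough to recover a build quickly at the much higher request counts described above.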

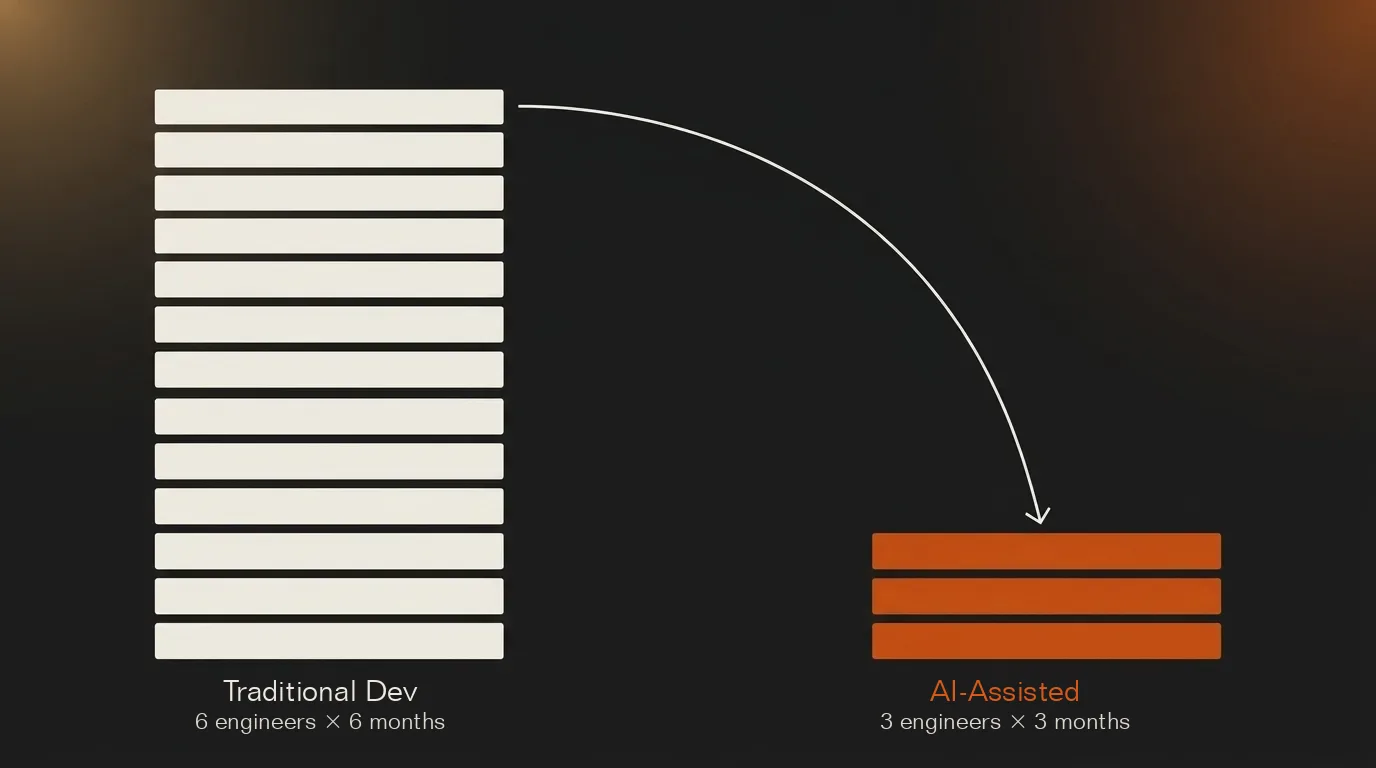

How AI Tools Are Reducing AI Development Cost

The irony of 2026 AI economics: the tools that cost money to run are simultaneously reducing the cost to build.

One developer documented a 7-week AI development sprint using Claude Code. The output: 219,000 lines of code across 8 projects. Using COCOMO estimation (a standard software cost model), this equates to $7-8 million of engineering output.

Not a typo. AI-assisted development can produce 10-50x productivity multipliers on certain coding tasks.
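For context on where a figure like $7-8 million comes from: the source doesn’t say which COCOMO variant or labor rate was used, so this is a minimal Python sketch of Basic COCOMO in organic mode (effort = 2.4 × KLOC^1.05 person-months), with hypothetical fully loaded costs of $10,000-$12,000 per person-month. Treating all eight projects as one 219 KLOC codebase is a simplification; `basic_cocomo_effort` is an illustrative helper:

```python
def basic_cocomo_effort(kloc: float, a: float = 2.4, b: float = 1.05) -> float:
    """Basic COCOMO effort in person-months (defaults: organic-mode coefficients)."""
    return a * kloc ** b


effort = basic_cocomo_effort(219)  # 219,000 lines of code = 219 KLOC
for cost_per_pm in (10_000, 12_000):  # hypothetical loaded cost per person-month, USD
    print(f"{effort:,.0f} person-months x ${cost_per_pm:,} = ${effort * cost_per_pm:,.0f}")
```

That yields roughly 688 person-months, or about $6.9-8.3 million at those rates, in line with the quoted estimate.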

The practical impact on AI development cost:

- Boilerplate acceleration. API integrations, data pipeline scaffolding, and testing harnesses that took days now take hours. Sometimes minutes.

- Reduced iteration cycles. Prototype-to-production timelines compress when AI assists with refactoring and optimization.

- Smaller teams. Three engineers with strong AI tooling match six without it.

The implication: the $80,000 custom ML project of 2024 may be achievable for $40,000-$50,000 in 2026. Roughly half the cost. Same output, assuming the team has adopted the tools effectively.

The tooling shift changes vendor selection math. In 2024, hiring a team with deep ML expertise justified premium rates. In 2026, a smaller team with strong AI-assisted development practices often delivers faster than a larger traditional team. When evaluating AI development vendors, ask: what percentage of their engineers use AI coding assistants daily? Teams stuck on 2024 workflows charge 2024 prices for 2024 productivity.

Common Mistakes That Inflate AI Development Cost

These four budget errors account for most cost overruns in AI projects.

Mistake 1: Skipping the proof-of-concept. Jumping straight to full development without validating feasibility wastes $100,000-$300,000 when the AI can’t actually solve the problem. A $15,000-$30,000 PoC over 4-6 weeks proves (or disproves) the approach before major commitment. Skipping this step is the single most expensive shortcut in AI development. Don’t.

Mistake 2: Underestimating data preparation. Teams budget 5-10% for data work when reality demands 20-40%. A healthcare AI project in 2025 spent $180,000 on data cleaning and labeling—double the original estimate—before model training even began. The fix: build in a 2x buffer for data costs.

Mistake 3: Ignoring inference economics. Development-focused budgets miss the subscription cost of running AI in production. A $75,000 chatbot build with $8,000/month inference costs becomes a $171,000 Year 1 investment. Calculate inference costs before signing development contracts.

Mistake 4: Over-engineering v1. First versions don’t need multi-region deployment, custom model training, or enterprise-grade observability. These add $50,000-$150,000 to initial builds. Ship a working v1 with basic infrastructure. Add sophistication after validating product-market fit. A startup we worked with spent $200,000 building enterprise-grade infrastructure for an AI product that never found users. Ship lean. Scale later.

How to Estimate Your AI Budget: The 4-Step Framework

Stop guessing at ranges. Use this framework to calculate your actual Year 1 AI investment.

Step 1: Define scope and tier

Which tier does your project fall into?

- PoC/Basic ($5K-$80K development)

- Custom ML ($80K-$350K development)

- Production GenAI ($100K-$500K+ development)

- Enterprise platform ($300K-$1.5M+ development)

Most organizations should start with PoC and graduate. Jumping straight to enterprise-scale is the $100K+ mistake.

Step 2: Assess data readiness

Answer honestly:

- Do you have 10,000+ examples for training/fine-tuning?

- Is your data labeled, cleaned, and structured?

- Can you access it programmatically?

If you answered “no” to any: add 30-50% to your development budget for data preparation, or consider API-first approaches that don’t require custom data.

Step 3: Calculate total cost of ownership

| Cost Component | Your Estimate |
|---|---|
| Development (from Step 1) | $ |
| Data preparation (if needed) | $ |
| Infrastructure (Year 1) | $ |
| API/inference costs (Year 1) | $ |
| Maintenance (15-30% of dev) | $ |
| Total Year 1 | $ |
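Steps 2 and 3 reduce to a few lines of arithmetic. A minimal Python sketch, assuming the low end of the Step 2 data uplift (30%) and a 20% maintenance rate as defaults; the example inputs ($100,000 build, data not ready, $1,500/month infrastructure, $6,000/month inference) are hypothetical, as are the names `estimate_year1` and `Year1Budget`:

```python
from dataclasses import dataclass


@dataclass
class Year1Budget:
    development: float
    data_preparation: float
    infrastructure: float
    inference: float
    maintenance: float

    @property
    def total(self) -> float:
        return (self.development + self.data_preparation + self.infrastructure
                + self.inference + self.maintenance)


def estimate_year1(development: float, data_ready: bool,
                   infra_monthly: float, inference_monthly: float,
                   data_uplift: float = 0.30, maintenance_rate: float = 0.20) -> Year1Budget:
    """Fill in the Step 3 table.

    data_uplift: Step 2 adds 30-50% of development if any readiness answer is "no".
    maintenance_rate: 15-30% of development per year.
    """
    return Year1Budget(
        development=development,
        data_preparation=0.0 if data_ready else development * data_uplift,
        infrastructure=12 * infra_monthly,
        inference=12 * inference_monthly,
        maintenance=development * maintenance_rate,
    )


budget = estimate_year1(100_000, data_ready=False, infra_monthly=1_500, inference_monthly=6_000)
for name, value in vars(budget).items():
    print(f"{name:<18} ${value:>10,.0f}")
print(f"{'total':<18} ${budget.total:>10,.0f}")
```

With those inputs the total comes to $240,000. Raising `data_uplift` to 0.50 and `maintenance_rate` to 0.30 gives the pessimistic end of the range: $270,000.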

Step 4: De-risk before committing

Before signing a $150,000 AI development contract, spend $15,000-$30,000 on a scoped assessment that delivers:

- Validated data readiness

- Technical architecture recommendation

- Build vs. buy decision

- Realistic timeline and budget

ProductCrafters offers this as a 2-week AI Launch Sprint, a fixed-scope engagement that prevents the $100K+ mistakes caused by skipping due diligence.